The Rise of Insider AI Shadow IT: How Employee‑Created Automations Introduce New Compliance Risks

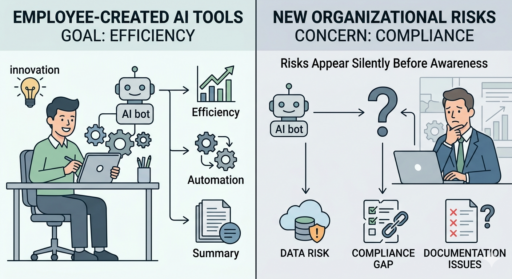

Artificial intelligence has become a central focus in compliance discussions, with organizations increasingly concerned about external threats such as deepfake fraud, AI‑enabled phishing, and synthetic identities. These risks deserve attention—but they represent only part of the picture. A less visible but rapidly growing challenge is unfolding inside organizations: employees are independently deploying or creating AI‑powered tools and automations to streamline their own work.

These insider-built AI tools are often born from good intentions. Employees want to improve efficiency, eliminate repetitive tasks, or “future‑proof” their workflow. But when these tools operate outside formal oversight, they can introduce new—and sometimes significant—compliance, security, documentation, and ethical risks.

The goal here isn’t to portray employee innovation as dangerous. In fact, employee‑led AI adoption often reflects initiative, curiosity, and a desire to contribute. The challenge is that most organizations have not yet built governance structures that can keep pace with how quickly employees can now build and deploy automations. As a result, risks appear quietly, long before leadership becomes aware of them.

Employee‑Driven AI: A Powerful New Category of Shadow IT

A decade ago, “shadow IT” typically meant someone installing unapproved software or using a personal device for work purposes. Today, AI shadow IT fundamentally changes the landscape: employees can build powerful automations using nothing more than a website, a plugin, or a no‑code integration platform.

Employees now commonly use unsanctioned AI tools to:

- Summarize emails, documents, or meeting transcripts

- Rephrase or draft communications

- Generate spreadsheet formulas or data transformations

- Automate task sequences in workflow tools

- Build simple bots or scripts to assist with approvals or categorization

- Connect AI agents to internal systems through no‑code integration tools

These behaviors are not inherently improper—and often they help the organization. But because they emerge informally and spread quietly, leaders, compliance teams, and IT departments often discover them well after the tools have influenced operational decisions, documentation, or customer interactions.

This creates an environment where risk is not intentional—it’s incidental.

Understanding the Real Risks: Less About Training Data, More About Usage Patterns

A common misconception is that AI risks primarily revolve around language models “training on user data.” It’s important to be precise here: most major enterprise AI providers, including Microsoft, OpenAI (in its enterprise tiers), and Anthropic, state that by default they do not use customer prompts from their business offerings to train their foundation models. That significantly reduces one class of risk.

However, this does not eliminate other meaningful risks that arise from:

- Non‑enterprise AI tools or free consumer versions

- Browser extensions that process data unpredictably

- Third‑party integrations that have unclear data‑handling practices

- Local scripts and automations created without security review

- Copy‑and‑paste behavior that exposes sensitive content regardless of backend policies

- Misuse of otherwise secure enterprise AI tools, such as entering data that should never have been input in the first place

So while “model training risk” should not be overstated, data exposure risk remains significant when employees interact with tools that were never designed—or approved—for regulated workflows.
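The distinction above can be made concrete with a small check. The following is a minimal sketch, not a definitive implementation: the `Tool` record, the tier labels, and the `NEVER_INPUT` categories are hypothetical placeholders for whatever an organization's own tool inventory and data classification define. It illustrates that an approved enterprise tool can still produce a finding when restricted data is entered, and that an unreviewed consumer tool raises concerns regardless of what is entered.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    """Hypothetical inventory record for one AI tool."""
    name: str
    tier: str       # "enterprise" or "consumer" (illustrative labels)
    approved: bool  # has the tool passed the organization's review?


# Hypothetical data categories that policy says must never be entered into any AI tool.
NEVER_INPUT = {"hr_file", "investigative_notes"}


def assess_usage(tool: Tool, data_categories: set[str]) -> list[str]:
    """Return findings for one use of an AI tool; an empty list means none were found."""
    findings = []
    if not tool.approved:
        findings.append("tool has not been reviewed or approved")
    if tool.tier != "enterprise":
        findings.append("consumer tier: data-handling terms may differ from enterprise terms")
    # Restricted data is a finding even when the tool itself is approved.
    blocked = sorted(data_categories & NEVER_INPUT)
    if blocked:
        findings.append("restricted data entered: " + ", ".join(blocked))
    return findings
```

For example, `assess_usage(Tool("approved_assistant", "enterprise", True), {"hr_file"})` returns a single finding about restricted data, while the same call for an unapproved consumer browser extension returns three findings.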

Where Good Intentions Create Compliance Challenges

Even when employees are trying to help, insider-created AI tools can cause problems in several ways:

- Unintentional Exposure of Sensitive Information

Employees may paste or upload confidential information—including HR files, customer contracts, financial figures, or investigative notes—simply to get a quick summary or rewrite. Even with secure enterprise AI, the act of copying data into a tool may violate:

- Company policies

- Contracts

- Government regulations

- Privacy expectations

It’s not just about where the data goes—it’s about whether the employee was authorized to move it there.

- Automated Actions Without Oversight

When employees build AI‑powered scripts or workflows that automatically send emails, categorize reports, or flag issues, the organization may lose visibility into:

- How decisions are being made

- Whether the bot introduces biased or inconsistent behavior

- How errors will be detected

- Whether documentation still meets regulatory requirements

The issue isn’t that AI “cannot understand nuance”; modern models do handle nuance well in many contexts. The concern is that compliance decisions often require context the model was never given—and employees may assume the AI is more authoritative than it is.

- Documentation Integrity Risks

In investigations, audits, or disciplinary situations, precision matters. AI‑generated summaries, reframing, or cleaned‑up documentation can unintentionally:

- Remove qualifiers

- Alter tone

- Over‑generalize statements

- Introduce confident but incorrect interpretations

This can compromise a record’s evidentiary integrity, including its chain of custody, or distort key facts, even if the employee intended only to improve clarity.

- Blurred Responsibility

If an AI tool drafts or performs work on behalf of an employee, who is accountable for errors?

Without clear policy, this becomes a gray zone.

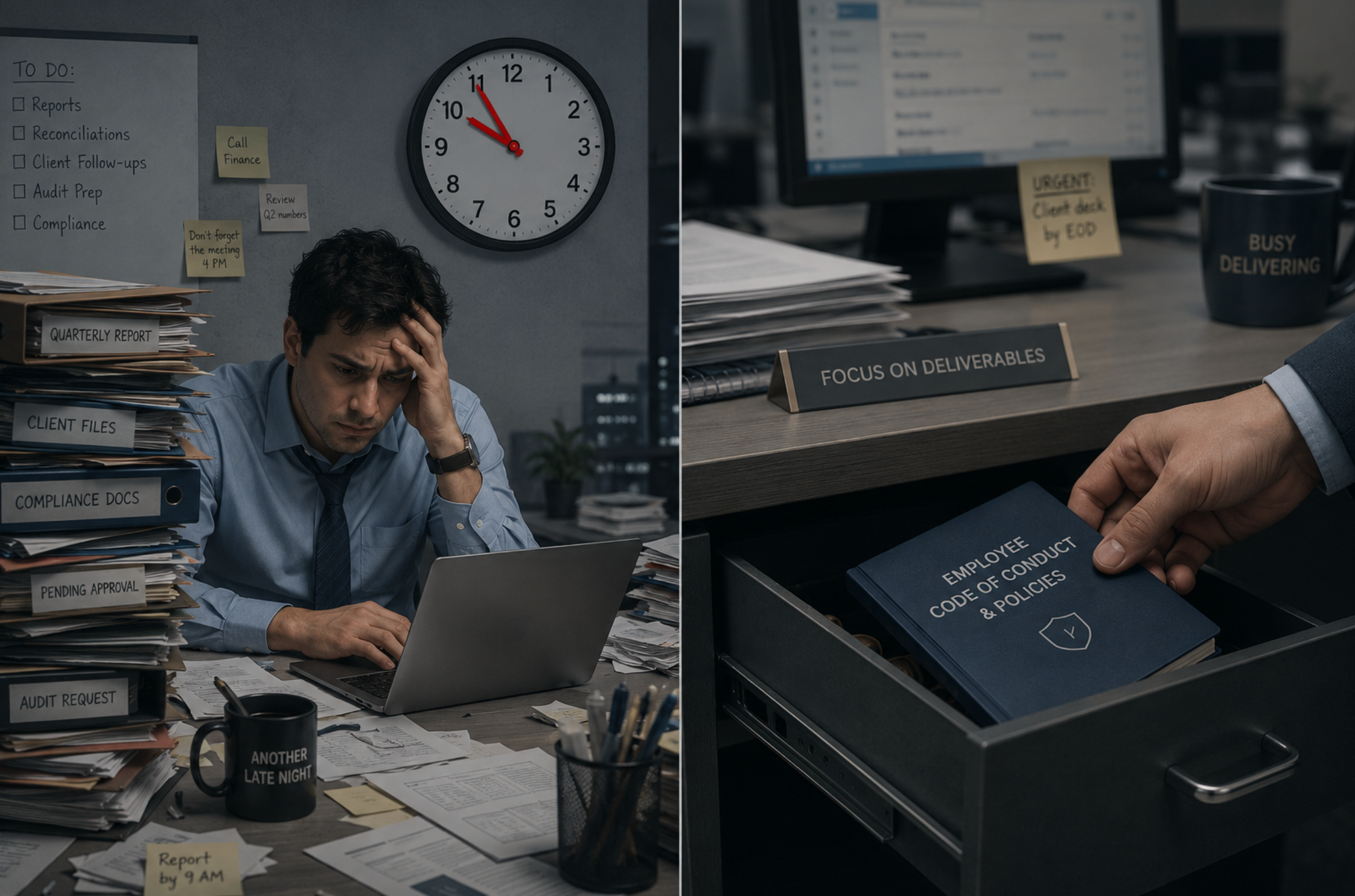

Why These Issues Are Often Invisible to Leadership

Unlike traditional shadow IT, AI shadow IT tools don’t always require installations or administrator permissions. Many operate entirely in the browser or inside communication platforms employees already use. As a result:

- Risks spread silently

- Often no logs are written and no alerts are triggered

- Small issues accumulate before anyone reports them

This is why a speak‑up culture is essential: not because employees are behaving badly, but because their ingenuity can outpace policies.

How Reporting Hotlines Help Catch Early Signs of AI‑Related Risk

Red Flag Reporting has long emphasized that employees closest to the work see the earliest signals of risk. In the context of insider AI use, this becomes especially important.

Hotlines allow employees to report things like:

- “A coworker built a bot that is sending automated approvals.”

- “Someone is pasting internal case notes into a consumer AI site.”

- “Our team is using an extension that summarizes confidential documents.”

- “A script is changing how we categorize incidents.”

These are subtle issues—not obvious misconduct—and employees often hesitate to bring them up unless they know they can do so safely and anonymously.

A hotline creates exactly that environment.

Protecting Your Workplace: Five Practical Steps

1. Create a Clear, Nuanced AI Use Policy

Avoid blanket bans, which push employees toward secrecy.

The policy should spell out:

- Approved tools

- Prohibited data types

- Guidelines for documentation

- Expectations for reporting questionable use

2. Offer Regular AI Awareness Training

Employees must understand the difference between enterprise‑grade and consumer‑grade AI, and why this distinction matters.

3. Encourage Early Reporting

Make sure employees know:

- They will not be punished for good‑faith reporting

- Uncertainty is reportable

- AI‑related concerns qualify for hotline use

4. Integrate IT, HR, Compliance, Legal, and Security

AI risks cut across all departments. Shared ownership ensures nothing falls through the cracks.

5. Monitor for Patterns

Use case management data to identify emerging themes and prevent small missteps from turning into systemic risks.
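As one illustration of pattern monitoring, the sketch below counts report themes by month and surfaces those that are both recurring and increasing. It is a rough sketch under stated assumptions: the `CASES` records, the theme tags, and the `min_count` threshold are all hypothetical, and a real case management system would supply its own export format and categories.

```python
from collections import Counter
from datetime import date

# Hypothetical case records: (date received, theme tag assigned at intake).
CASES = [
    (date(2025, 1, 14), "consumer_ai_paste"),
    (date(2025, 1, 22), "automated_approval"),
    (date(2025, 2, 3), "consumer_ai_paste"),
    (date(2025, 2, 9), "browser_extension"),
    (date(2025, 2, 11), "consumer_ai_paste"),
    (date(2025, 2, 18), "automated_approval"),
    (date(2025, 2, 20), "browser_extension"),
    (date(2025, 2, 27), "consumer_ai_paste"),
]


def emerging_themes(cases, current_month, min_count=2):
    """Return themes seen at least min_count times in current_month
    and more often than in the month before, sorted by name."""
    year, month = current_month
    previous = (year - 1, 12) if month == 1 else (year, month - 1)
    now = Counter(tag for d, tag in cases if (d.year, d.month) == (year, month))
    before = Counter(tag for d, tag in cases if (d.year, d.month) == previous)
    # Counter returns 0 for themes that did not appear in the previous month.
    return sorted(tag for tag, n in now.items() if n >= min_count and n > before[tag])


print(emerging_themes(CASES, (2025, 2)))
```

With the sample data, February surfaces the `browser_extension` and `consumer_ai_paste` themes, while the single repeat of `automated_approval` stays below the threshold. The threshold and the month-over-month comparison are design choices; a team with low report volume might compare quarters instead.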

Conclusion: AI Shadow IT Empowers Employees — Governance Must Keep Pace

AI shadow IT democratizes problem‑solving. Employees can now build tools that would have required an engineering team just a few years ago. That capability is powerful—and overwhelmingly positive—when guided by strong governance.

The challenge for organizations is to:

- Provide safe, approved AI pathways

- Build a culture where employees can speak up early

- Treat insider AI use not as misconduct, but as an emerging area requiring clarity

With the right reporting structures—especially an independent, trusted hotline—organizations can harness employee innovation while staying protected.
